Publications

(*=equal contribution)

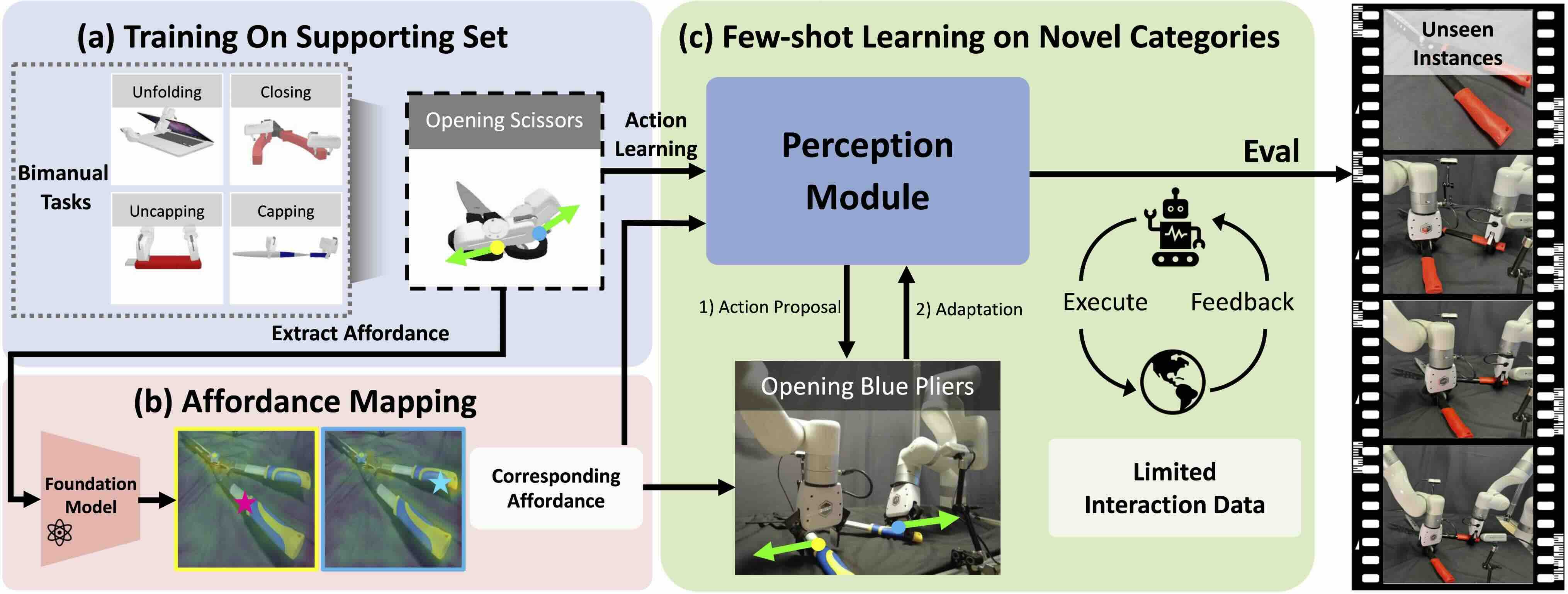

| Bi-Adapt: Few-shot Bimanual Adaptation for Novel Categories of 3D Objects via Semantic Correspondence. Jinxian Zhou*, Ruihai Wu*, Yiwei Liu, Yiwen Hou, Xunzhe Zhou, Checheng Yu, Licheng Zhong, Lin Shao. International Conference on Robotics & Automation (ICRA), 2026. Project Page / arXiv / Code. Bi-Adapt is a diffusion-based framework for efficient and generalizable bimanual manipulation across novel object categories and complex tasks. |

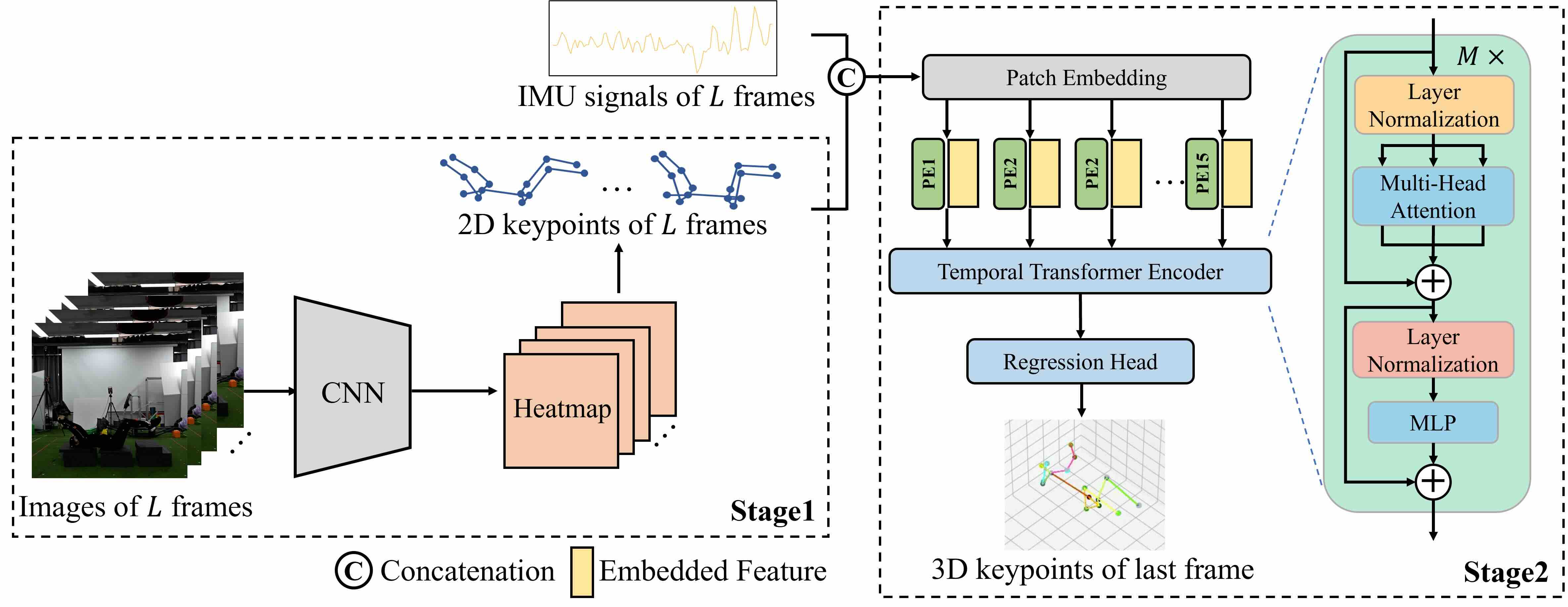

| Transformer-Based Full-Body Pose Estimation for Rehabilitation via RGB Camera and IMU Fusion. Yuanshuo Tan, Xinyuan He, Guoxing Liu, Licheng Zhong, Huiming Pan, Kezhe Zhu, Peter B Shull. International Conference on Body Sensor Networks (BSN), 2025, Oral Presentation. Paper. This work proposes a temporal transformer-based method that fuses images and IMU data to improve 3D human pose estimation for specialized rehabilitation exercises. |

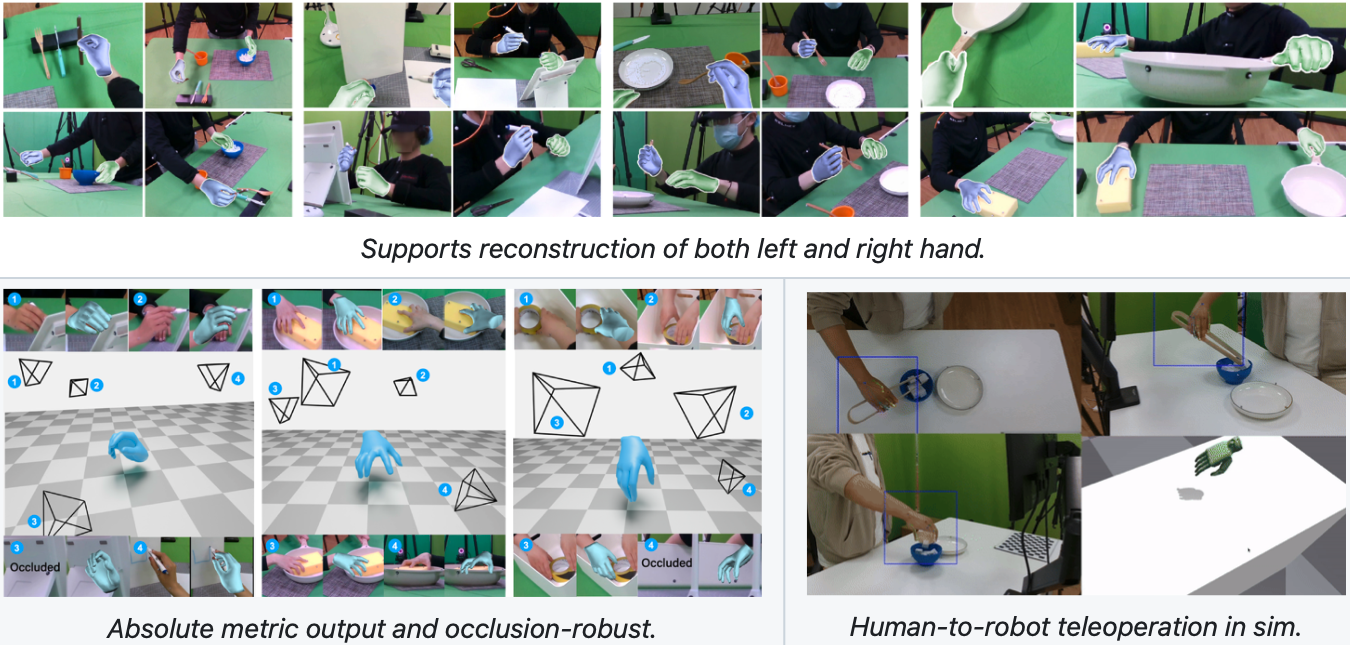

| Multi-view Hand Reconstruction with a Point-Embedded Transformer. Lixin Yang, Licheng Zhong, Pengxiang Zhu, Xinyu Zhan, Junxiao Kong, Jian Xu, Cewu Lu. Transactions on Pattern Analysis and Machine Intelligence (TPAMI), 2025. arXiv / Code. POEMv2 is a generalizable multi-view hand mesh reconstruction model that embeds a static basis point within the multi-view stereo space. |

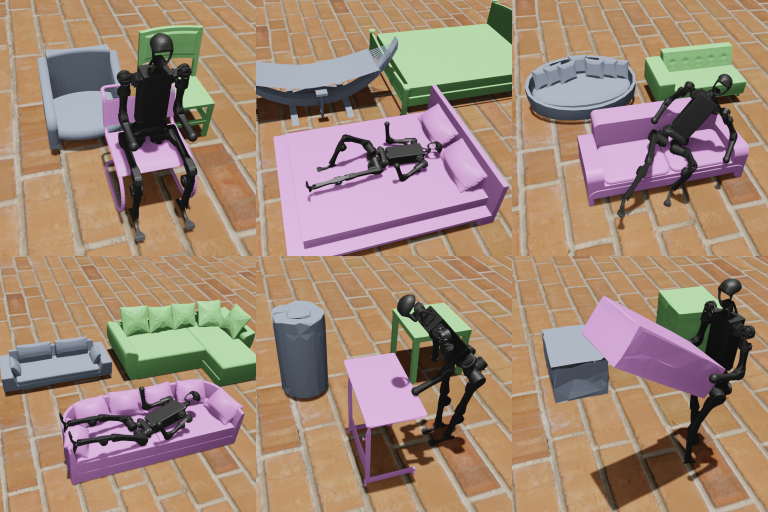

| Mimicking-Bench: A Benchmark for Generalizable Humanoid-Scene Interaction Learning via Human Mimicking. Yun Liu*, Bowen Yang*, Licheng Zhong*, He Wang, Li Yi. arXiv preprint; CVPR Workshop on Humanoid Agents, 2025, Spotlight. Project Page / arXiv. Mimicking-Bench is the first comprehensive benchmark for generalizable humanoid-scene interaction learning via human mimicking. It integrates a large-scale, diverse human skill reference dataset with both synthetic and real-world human-scene interactions. It also develops a general skill-learning paradigm and supports both pipeline-wise and modular evaluations. |

| Reconstruction and Simulation of Elastic Objects with Spring-Mass 3D Gaussians. Licheng Zhong, Hong-Xing "Koven" Yu, Jiajun Wu, Yunzhu Li. European Conference on Computer Vision (ECCV), 2024. Project Page / arXiv / Data / Code. Spring-Gaus reconstructs the appearance, geometry, and physical dynamic properties of elastic objects from video observations. Spring-Gaus enables future prediction and simulation under different initial states and environmental parameters. |

| Color-NeuS: Reconstructing Neural Implicit Surfaces with Color. Licheng Zhong*, Lixin Yang*, Kailin Li, Haoyu Zhen, Mei Han, Cewu Lu. International Conference on 3D Vision (3DV), 2024. Project Page / Paper / arXiv / Data / Code. Color-NeuS focuses on mesh reconstruction with color. We remove view-dependent color while using a relighting network to maintain volume rendering performance. The mesh is extracted from the SDF network, and vertex color is derived from the global color network. We conceived an in-hand object scanning task and gathered several videos for it to evaluate Color-NeuS. |

| CHORD: Category-level in-Hand Object Reconstruction via Shape Deformation. Kailin Li*, Lixin Yang*, Haoyu Zhen, Zenan Lin, Xinyu Zhan, Licheng Zhong, Jian Xu, Kejian Wu, Cewu Lu. International Conference on Computer Vision (ICCV), 2023. Project Page / Paper / arXiv / PyBlend. We propose CHORD, a new method that exploits the categorical shape prior to reconstruct the shape of intra-class objects. In addition, we constructed COMIC, a new dataset of category-level hand-object interaction. COMIC encompasses a diverse collection of object instances, materials, hand interactions, and viewing directions. |

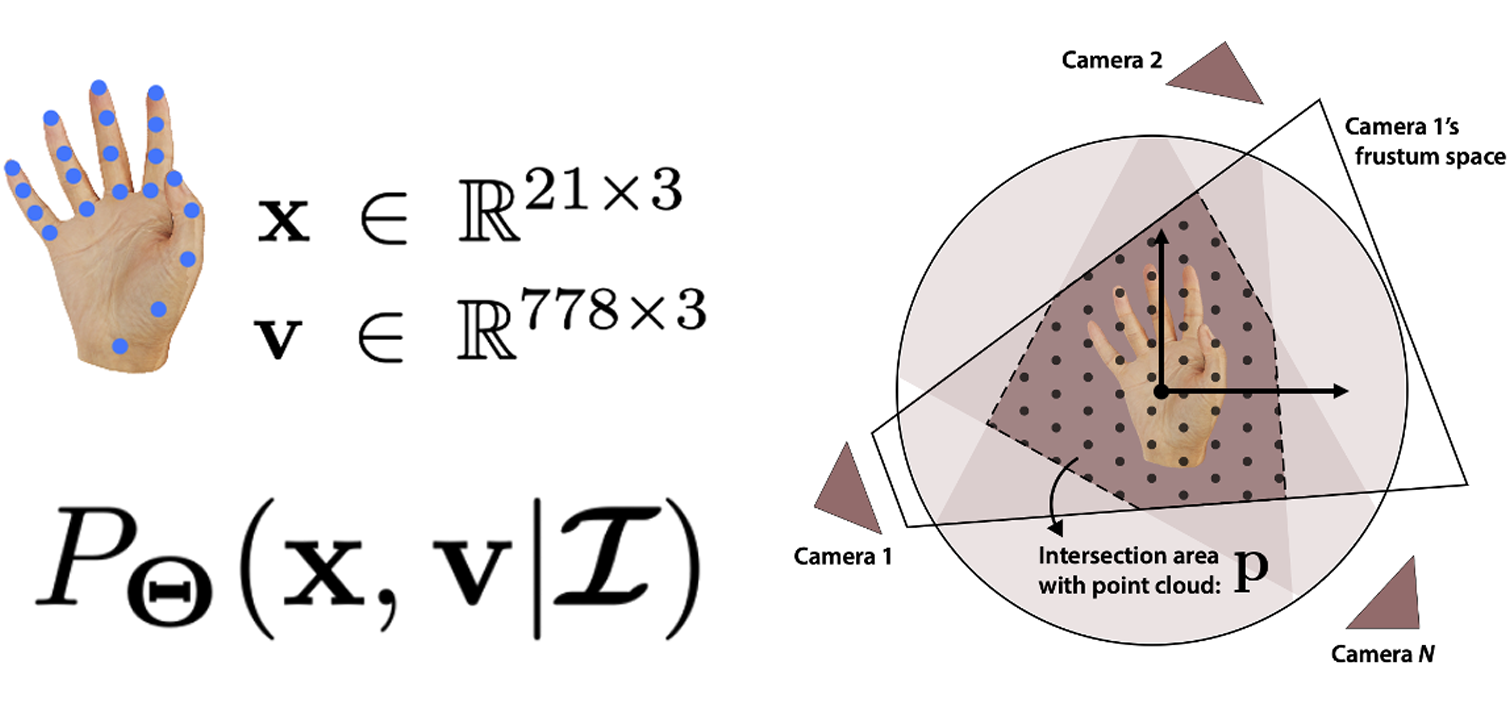

| POEM: Reconstructing Hand in a Point Embedded Multi-view Stereo. Lixin Yang, Jian Xu, Licheng Zhong, Xinyu Zhan, Zhicheng Wang, Kejian Wu, Cewu Lu. Computer Vision and Pattern Recognition (CVPR), 2023. Paper / arXiv / Code. POEM (Point Embedded Multi-view) focuses on reconstructing an articulated body with "true scale" and "accurate pose" from a series of sparsely arranged camera views. In practice, we use the hand as the example. POEM explores the power of points, using a cluster of (x, y, z) coordinates with natural positional encoding to find associations in multi-view stereo. |

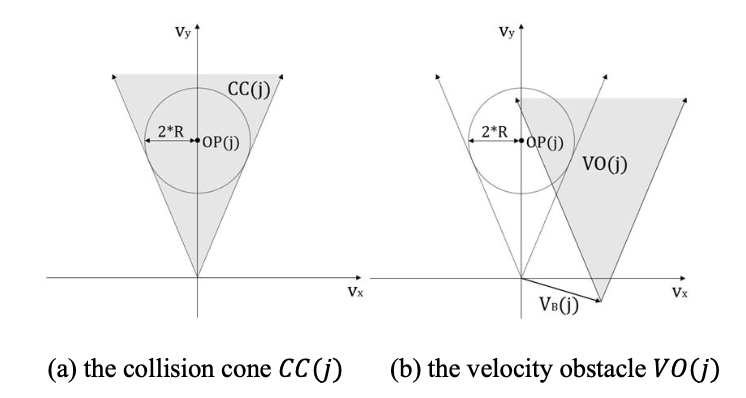

| Centralized and Decentralized Methods for Multi-robot Safe Navigation. Xinbei Wang*, Zexuan Yan*, Licheng Zhong*. Machine Learning and Intelligent Systems Engineering (MLISE), 2022. Paper. We simulated two centralized methods and two decentralized methods for multi-robot safe navigation in their respective environments. |